Kubernetes has become one of the most widely used container orchestration systems for deploying, scaling, and managing containerized apps in distributed environments. More and more apps now rely on microservices and containers: according to a 2022 survey by the Cloud Native Computing Foundation (CNCF), 44% of respondents already use containers for almost all apps and business segments.

Although Kubernetes needs little introduction for software developers, and software development companies are well aware of its potential to simplify app development and improve resource utilization, it is a complex system with its own set of challenges. So, what exactly is Kubernetes? What issues is it trying to solve? How does it help development teams?

If you’re new to Kubernetes, it is easy to feel lost in the overwhelming amount of information available on the internet. There is no need to worry: this beginner’s guide explains the container orchestration platform, its architecture, components, fundamentals, and more.

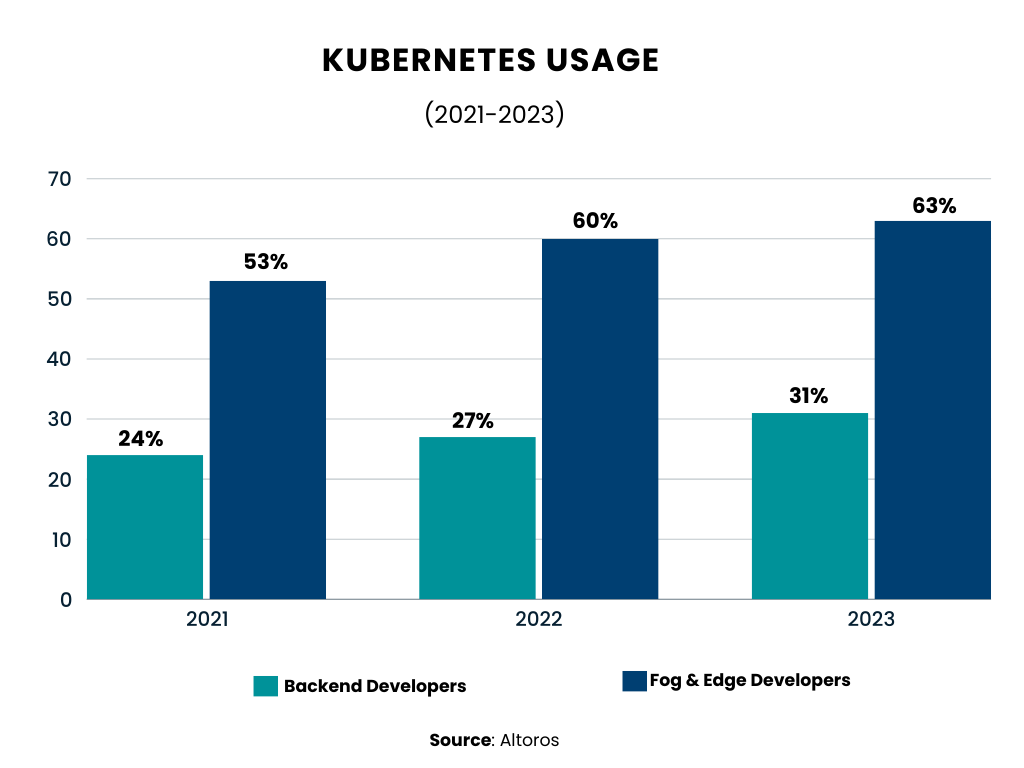

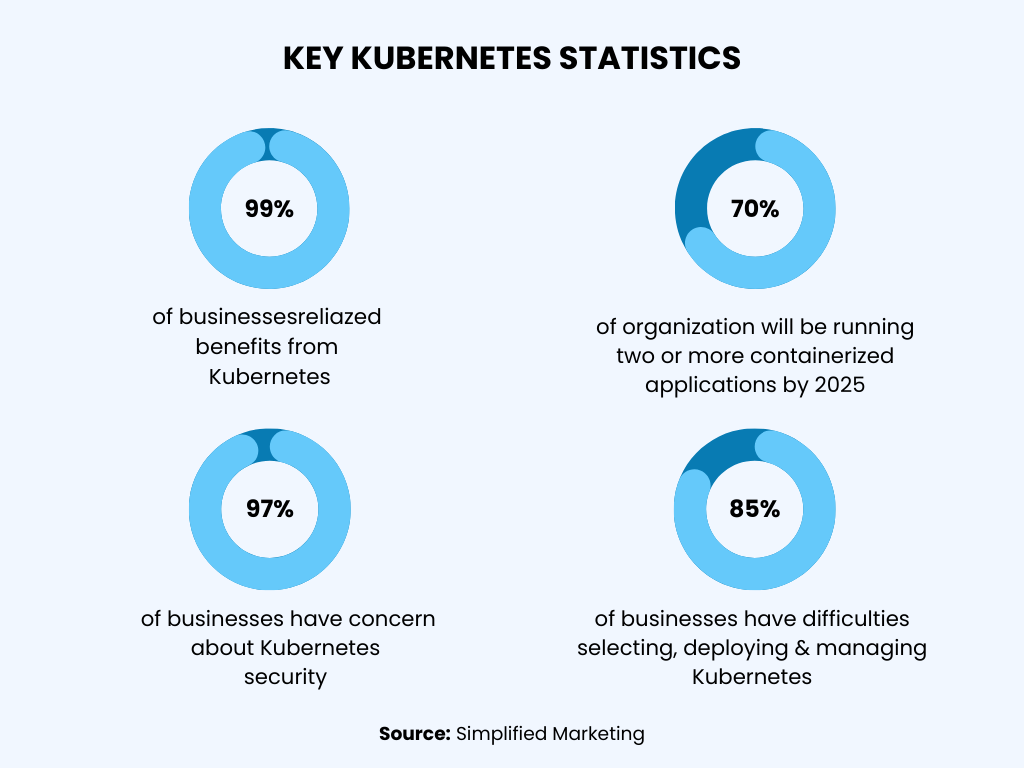

Surprising Stats on Kubernetes You Should Know

- According to Red Hat’s State of Enterprise Open Source 2022 report, nearly 68% of businesses are already running containers. (Source: EnterprisersProject)

- 70% of IT leaders report that their organization is using Kubernetes. (Source: EnterprisersProject)

- There are over 280 certified Kubernetes service providers, up from 251 in 2022. (Source: EnterprisersProject)

- There are 3,249 Kubernetes contributors to the GitHub repository. (Source: EnterprisersProject)

What is Kubernetes?

Kubernetes, often referred to as K8s or simply “kube,” is an open-source system that streamlines the deployment, management, and scaling of containerized applications. It acts as an orchestration platform, automating many manual tasks in handling containers.

Kubernetes groups the containers that constitute an application into logical units, making them easier to manage and discover. It builds upon Google’s 15 years of experience running production workloads and incorporates best practices from the community.

Designed based on the principles that allow Google to handle billions of containers weekly, this container orchestration platform can scale without requiring a significant increase in your operations team.

How Does Kubernetes Work?

Kubernetes runs on top of a regular operating system and manages a cluster of machines. The cluster consists of worker machines called nodes, which can run Linux or Windows, and a manager called the control plane, which runs on Linux.

It schedules containers onto these machines efficiently, weighing the resources each container requests against the capacity available on each machine so that everything runs smoothly. This coordination of containers across the cluster’s compute, network, and storage resources is what we call orchestration.
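To make the idea of matching container needs to machine capacity concrete, here is a minimal sketch in Python. It is not the real Kubernetes scheduler: the `Node` and `ContainerRequest` classes, the node names, and the "most free CPU wins" rule are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    free_cpu_millicores: int
    free_memory_mib: int


@dataclass
class ContainerRequest:
    name: str
    cpu_millicores: int
    memory_mib: int


def pick_node(request, nodes):
    """Place the request on the fitting node with the most CPU left over.

    Returns the chosen node's name, or None if no node has enough capacity.
    """
    # Filter step: keep only nodes that can satisfy both CPU and memory.
    candidates = [
        n for n in nodes
        if n.free_cpu_millicores >= request.cpu_millicores
        and n.free_memory_mib >= request.memory_mib
    ]
    if not candidates:
        return None
    # Score step (hypothetical rule): prefer the node with the most spare CPU.
    best = max(candidates, key=lambda n: n.free_cpu_millicores)
    # Reserve the resources so later placements see the reduced capacity.
    best.free_cpu_millicores -= request.cpu_millicores
    best.free_memory_mib -= request.memory_mib
    return best.name


nodes = [Node("node-a", 500, 1024), Node("node-b", 2000, 4096)]
print(pick_node(ContainerRequest("web", 1000, 512), nodes))
print(pick_node(ContainerRequest("batch", 4000, 512), nodes))
```

The first request lands on `node-b`, the only node with enough CPU, while the second request fits nowhere and stays unplaced, which is roughly what a pending pod looks like.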

What is Kubernetes Used for?

Kubernetes is a powerful container orchestration tool that automates and manages cloud-native containerized applications.

Let’s explore some of the compelling use cases for Kubernetes:

Large-Scale App Deployment

Kubernetes excels at handling large applications. Its automation capabilities, declarative configuration approach, and features like horizontal pod autoscaling and load balancing allow developers to set up systems with minimal downtime.

During unpredictable moments (such as traffic surges or hardware defects), this container orchestration platform ensures that everything remains up and running.

For instance, platforms like Glimpse use Kubernetes alongside ecosystem tools (such as Kube Prometheus Stack, Tiller, and EFK Stack) to organize cluster monitoring.
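The horizontal scaling mentioned above follows a simple proportional rule in the Kubernetes documentation: the desired replica count is the current count multiplied by the ratio of the observed metric to the target metric, rounded up. The sketch below applies that rule in Python. The function name, the default bounds, and the sample numbers are assumptions, and real autoscaler behavior such as tolerance bands and stabilization windows is left out.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Proportional scaling rule: ceil(current * observed / target), then clamp."""
    desired = math.ceil(current_replicas * (current_metric / target_metric))
    # Keep the result inside the configured bounds (hypothetical defaults).
    return max(min_replicas, min(max_replicas, desired))


# Traffic surge: 4 replicas at 90% average CPU with a 60% target.
print(desired_replicas(4, current_metric=90, target_metric=60))
# Quiet period: 4 replicas at 30% average CPU with a 60% target.
print(desired_replicas(4, current_metric=30, target_metric=60))
```

In the surge case the rule asks for six replicas, and in the quiet case it shrinks the workload to two.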

Managing Microservices

Many modern applications adopt a microservices architecture to simplify code management. Microservices are small, separate services that each handle one function and communicate with each other over the network.

Kubernetes provides the necessary tools to manage microservices, including fault tolerance, load balancing, and service discovery.
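As a rough illustration of service discovery, the sketch below models how a Service finds its pods through label selectors and how clients address it through a cluster DNS name. The `Pod` class, the pod names, and the IP addresses are hypothetical. The DNS pattern assumes the common default cluster domain `cluster.local`, which an administrator can change.

```python
from dataclasses import dataclass


@dataclass
class Pod:
    name: str
    ip: str
    labels: dict
    ready: bool = True


def service_dns_name(service, namespace="default", cluster_domain="cluster.local"):
    """Cluster-internal DNS name under which a Service is typically reachable."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


def endpoints_for(selector, pods):
    """IPs of ready pods whose labels contain every key/value pair in the selector."""
    return [
        p.ip for p in pods
        if p.ready and all(p.labels.get(k) == v for k, v in selector.items())
    ]


pods = [
    Pod("cart-1", "10.0.0.1", {"app": "cart"}),
    Pod("cart-2", "10.0.0.2", {"app": "cart"}, ready=False),  # failing pod
    Pod("pay-1", "10.0.0.3", {"app": "payments"}),
]
print(service_dns_name("cart", "shop"))
print(endpoints_for({"app": "cart"}, pods))
```

Only the ready `cart` pod is returned, which is the fault-tolerance aspect: traffic is kept away from pods that are not ready to serve it.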

Hybrid and Multicloud Deployments

Kubernetes allows businesses to extend on-premises workloads into the cloud and across multiple clouds. Hosting nodes in different clouds and availability zones increases resiliency and provides flexibility in choosing service configurations.

Expanding PaaS Options

Kubernetes can support serverless workloads, potentially leading to new types of platform-as-a-service (PaaS) options. The benefits of this approach include improved scalability, reliability, granular billing, and lower costs.

Simplifying Data-Intensive Workloads

Google Cloud Dataproc for Kubernetes enables running Apache Spark jobs, which are large-scale data analytics applications.

Edge Computing Extension

Organizations running Kubernetes in data centers and clouds can extend their capabilities to edge computing environments. This includes small server farms outside traditional data centers or industrial IoT models.

This container orchestration platform helps maintain, deploy, and manage edge components alongside application components in the data center.

Microservices Architecture

Kubernetes frequently hosts microservices-based systems, providing essential tools for managing fault tolerance, load balancing, and service discovery.

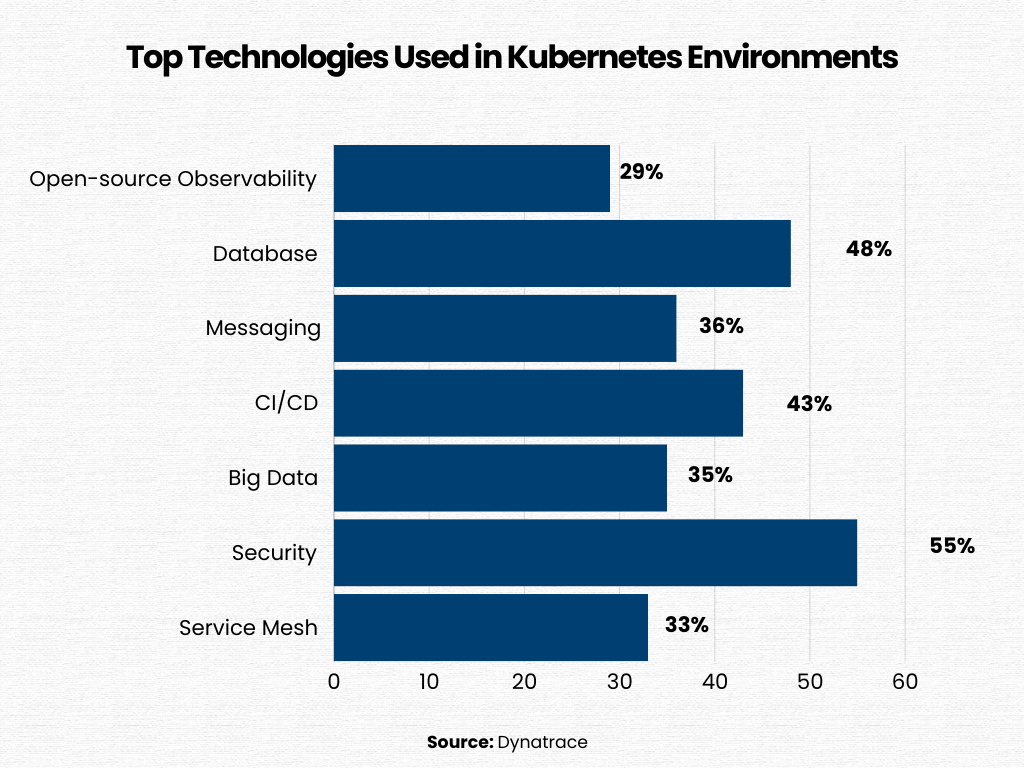

DevOps Practices

Kubernetes plays a crucial role in DevOps practices by enabling quick development, deployment, and scaling of applications.

Its support for continuous integration/continuous delivery (CI/CD) pipelines facilitates faster and more effective software delivery.

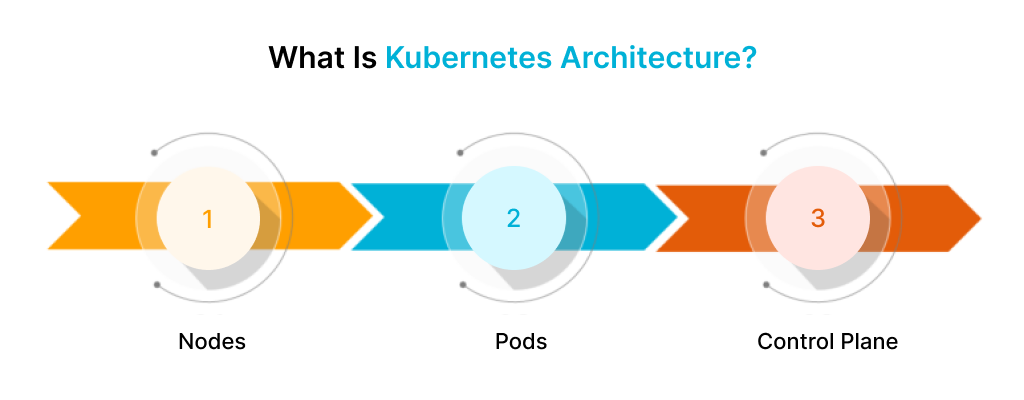

What Is Kubernetes Architecture?

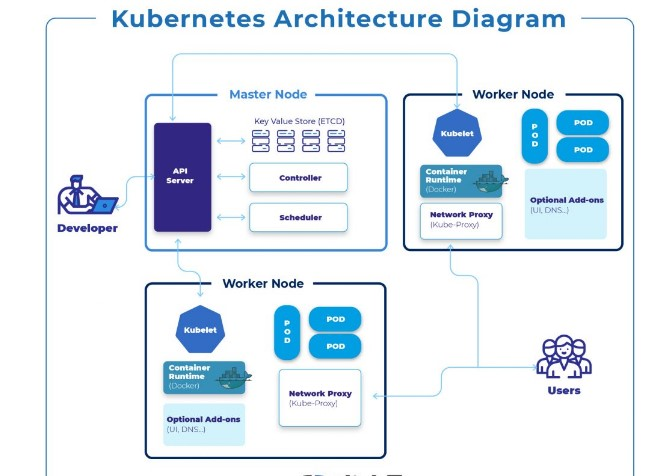

The Kubernetes architecture is highly modular and strictly follows the master-worker model. The master, also known as the control plane, manages the worker nodes, while containers are deployed and executed on the worker nodes. These nodes are either physical or virtual servers.

When a developer deploys this container orchestration platform, it sets up a cluster—a collection of machines that work together to manage containerized applications. Here’s a breakdown of the essential components within a Kubernetes cluster:

1. Nodes

Nodes are either virtual or physical machines that execute workloads. Each node hosts containers and provides the necessary services for running pods.

Key components on nodes include:

- Container Runtime: Responsible for executing containers.

- Kubelet: An agent running on each node, ensuring pod containers are healthy.

- Kube-proxy: Manages network rules for communication between pods within and outside the cluster.

2. Pods

Pods are the smallest deployable units in Kubernetes. A pod can contain one or more tightly coupled containers. Two common ways to use pods are:

- Single-Container Pods: A pod wraps around a single container, allowing Kubernetes to manage the pod as a whole.

- Multi-Container Pods: These encapsulate applications with multiple containers that share resources.
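A multi-container pod can be sketched as a manifest. The example below builds one as a Python dictionary and prints it as JSON, a format `kubectl` accepts alongside YAML. The pod name, container names, image tags, and paths are illustrative assumptions. The field names (`containers`, `volumes`, `emptyDir`, `volumeMounts`) come from the Pod API.

```python
import json

# Two containers in one pod sharing a scratch volume (all names are examples).
pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "web-with-sidecar", "labels": {"app": "web"}},
    "spec": {
        # An emptyDir volume lives as long as the pod and is visible to
        # every container that mounts it.
        "volumes": [{"name": "shared-logs", "emptyDir": {}}],
        "containers": [
            {
                "name": "web",
                "image": "nginx:1.27",
                "volumeMounts": [
                    {"name": "shared-logs", "mountPath": "/var/log/nginx"}
                ],
            },
            {
                "name": "log-tailer",
                "image": "busybox:1.36",
                "command": ["sh", "-c", "tail -F /logs/access.log"],
                "volumeMounts": [{"name": "shared-logs", "mountPath": "/logs"}],
            },
        ],
    },
}

print(json.dumps(pod, indent=2))
```

Because both containers mount the same volume, the sidecar can read files the web server writes, which is the kind of resource sharing that justifies placing them in one pod.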

3. Control Plane

The Control Plane maintains the desired state of the Kubernetes cluster. Its components include:

- Kube API Server: Exposes the Kubernetes API and serves as the control plane’s front end.

- etcd: A high-availability key-value store storing cluster data.

- Kube-scheduler: Assigns newly created pods to nodes based on resource requirements and policies.

- Kube Controller Manager: Runs various controllers, including:

- Node Controller: Detects and responds when nodes go down.

- Job Controller: Creates pods for one-time tasks.

- Endpoints Controller: Connects services and pods.

- Service Account and Token Controller: Creates default accounts and API access tokens.

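- Cloud Controller Manager: A separate control plane component, not part of the Kube Controller Manager, that integrates cloud-specific logic into the control plane so the cluster can use the cloud provider’s native APIs.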

Here’s a Kubernetes Diagram to help you understand how it works:

Kubernetes Components Overview

Kubernetes components fall into two main groups: the control plane and the data plane (the worker nodes). The control plane is responsible for managing the Kubernetes cluster, while the data plane comprises the machines used as compute resources. Let’s break down each component of this container orchestration platform to help you understand the concept more precisely.

Kubernetes Control Plane

The control plane is a collection of processes that manage the state of the Kubernetes cluster. It receives information about cluster activity and requests, and uses this information to move the cluster resources to the desired state. The control plane uses kubelet, the node’s agent, to interact with individual cluster nodes.

The main components of the Kubernetes control plane are:

1. API Server

The API Server is the very first thing you will come across in the Kubernetes control plane. It handles external and internal requests, determines their validity, and processes them. The API can be accessed through the kubectl command-line interface, other tools like kubeadm, and REST calls.
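Since the API is reachable through plain REST calls, it helps to see how resource URLs are laid out. The sketch below builds request paths for namespaced resources: core resources such as pods live under `/api/v1`, while resources in named API groups such as `apps` live under `/apis/<group>/<version>`. The helper name and the sample namespaces are assumptions. Cluster-scoped resources, which have no namespace segment, are not covered.

```python
def resource_path(resource, namespace, name=None, group="", version="v1"):
    """Build the REST path for a namespaced resource on the API server."""
    # The core ("legacy") group has an empty name and uses the /api prefix.
    prefix = f"/api/{version}" if group == "" else f"/apis/{group}/{version}"
    path = f"{prefix}/namespaces/{namespace}/{resource}"
    # Appending a name addresses one object instead of the whole collection.
    return f"{path}/{name}" if name else path


print(resource_path("pods", "default"))
print(resource_path("deployments", "prod", name="web", group="apps"))
```

A tool like `kubectl` ultimately issues HTTP requests against paths of this shape, adding authentication and content negotiation on top.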

2. Scheduler

The Scheduler is responsible for scheduling pods on specific nodes based on automated workflows and user-defined conditions, such as resource requests, affinity, taints, tolerations, priority, and persistent volumes.
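Taints and tolerations are one of the conditions listed above, and their matching logic is easy to sketch. The simplified model below filters out nodes that carry a hard taint the pod does not tolerate. The class names and node names are hypothetical, and some real rules are omitted, such as a toleration with an empty key and the `Exists` operator matching every taint.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Taint:
    key: str
    value: str
    effect: str  # "NoSchedule", "PreferNoSchedule", or "NoExecute"


@dataclass(frozen=True)
class Toleration:
    key: str
    operator: str = "Equal"  # "Equal" compares values, "Exists" ignores them
    value: str = ""
    effect: str = ""  # an empty effect matches any taint effect


def tolerates(toleration, taint):
    """True if this toleration matches the given taint."""
    if toleration.effect and toleration.effect != taint.effect:
        return False
    if toleration.key != taint.key:
        return False
    return toleration.operator == "Exists" or toleration.value == taint.value


def schedulable_nodes(node_taints, tolerations):
    """Names of nodes whose hard taints are all tolerated by the pod."""
    result = []
    for name, taints in node_taints.items():
        # PreferNoSchedule is only a soft preference, so it never filters.
        hard = [t for t in taints if t.effect in ("NoSchedule", "NoExecute")]
        if all(any(tolerates(tol, t) for tol in tolerations) for t in hard):
            result.append(name)
    return result


node_taints = {
    "gpu-1": [Taint("gpu", "true", "NoSchedule")],
    "general-1": [],
    "spot-1": [Taint("spot", "true", "PreferNoSchedule")],
}
print(schedulable_nodes(node_taints, []))
print(schedulable_nodes(node_taints, [Toleration("gpu", operator="Exists")]))
```

Without tolerations the pod is kept off the GPU node, and adding a matching toleration makes all three nodes eligible.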

3. Kubernetes Controller Manager

The Kubernetes Controller Manager runs the control loops that monitor and regulate the state of the cluster. Each loop receives information about the current state of the cluster and the objects within it, and sends instructions to move the cluster towards the desired state. The Controller Manager bundles several controllers, operating at the cluster or pod level, that handle automated activities.
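The "compare current state with desired state, then act" pattern can be sketched as a single reconciliation pass. The function below is a toy model, not controller code from Kubernetes: the pod naming scheme and the action tuples are assumptions, and real controllers work through the API server rather than on in-memory lists.

```python
def reconcile(desired_replicas, running_pods, name_prefix="web"):
    """One reconciliation pass: return the actions that close the gap
    between the desired replica count and the pods currently running."""
    actions = []
    diff = desired_replicas - len(running_pods)
    if diff > 0:
        # Too few pods: create new ones under names not already in use.
        existing = set(running_pods)
        index = 0
        while diff > 0:
            candidate = f"{name_prefix}-{index}"
            if candidate not in existing:
                actions.append(("create", candidate))
                existing.add(candidate)
                diff -= 1
            index += 1
    elif diff < 0:
        # Too many pods: delete the surplus, taken from the end of the sorted list.
        for pod in sorted(running_pods)[diff:]:
            actions.append(("delete", pod))
    return actions


print(reconcile(3, ["web-0"]))
print(reconcile(1, ["web-0", "web-1", "web-2"]))
print(reconcile(2, ["web-0", "web-1"]))
```

A real controller repeats a pass like this continuously, so a crashed pod or a changed replica count is corrected on the next iteration without manual intervention.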

4. etcd

etcd is a fault-tolerant and distributed key-value database that stores data about the cluster state and configuration.

5. Cloud Controller Manager

The Cloud Controller Manager embeds cloud-specific control logic, enabling the Kubernetes cluster to connect with the API of a cloud provider. It separates the components that interact with the cloud platform from those that interact only with the cluster, so that elements inside the cluster do not need to be aware of the implementation specifics of each cloud provider.

Kubernetes Core Components: Worker Nodes

6. Nodes

Nodes are physical or virtual machines that run pods as part of a Kubernetes cluster. Kubernetes is designed to support clusters of up to 5,000 nodes, and you can add nodes to scale the cluster’s capacity up to that limit.

7. Pods

A pod is the smallest unit in the Kubernetes object model, serving as a single application instance. Each pod consists of one or more tightly coupled containers and configurations that govern how the containers should run.

8. Container Runtime Engine

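Each node has a container runtime engine responsible for running containers. Kubernetes supports any runtime that implements the Container Runtime Interface (CRI), including containerd and CRI-O. Docker Engine is no longer supported directly since the removal of dockershim in Kubernetes v1.24 and now requires the cri-dockerd adapter, while rkt has been discontinued.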

9. kubelet

The kubelet is a small application on each node that communicates with the Kubernetes control plane. It is responsible for ensuring that containers specified in the pod configuration are running on a specific node and managing their lifecycle.

10. kube-proxy

The kube-proxy is a network proxy that runs on each node and facilitates Kubernetes networking services. It handles network communication to pods from inside and outside the cluster, relying on the packet filtering layer of the operating system where one is available and forwarding the traffic itself otherwise.

11. Container Networking

Container networking plays a crucial role in enabling communication between containers within a cluster. It ensures that containers can talk to each other, as well as to external hosts. One of the key technologies facilitating this connectivity is the Container Network Interface (CNI).

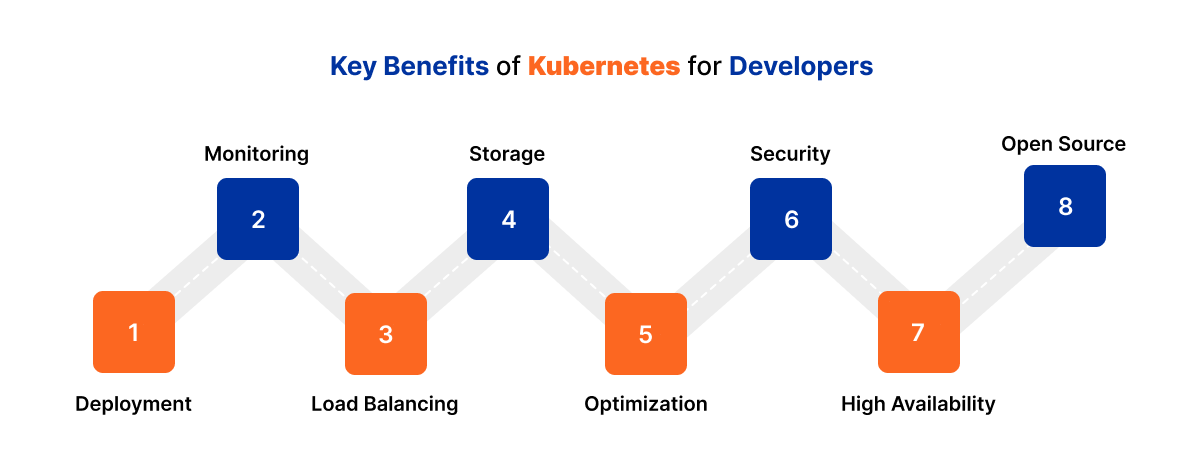

Key Benefits of Kubernetes for Developers

Kubernetes, as an open-source container orchestration platform, allows users to manage and automate containerized apps.

Let’s break down the key benefits:

Deployment

Kubernetes allows users to define and maintain the desired state of containerized applications. It handles tasks like creating new container instances, migrating existing ones, and removing outdated containers. DevOps teams can set policies for automation, scalability, and app resiliency, facilitating rapid code deployment.
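Desired state is usually written down as a manifest. The sketch below builds a Deployment manifest as a Python dictionary and prints it as JSON. The helper name, the application name, and the image tag are assumptions, while the field names follow the `apps/v1` Deployment API. The rolling update settings shown are one possible choice that keeps every existing replica serving during a rollout.

```python
import json


def make_deployment(name, image, replicas, labels):
    """Build an apps/v1 Deployment manifest as a plain dictionary."""
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": dict(labels)},
        "spec": {
            "replicas": replicas,
            # The selector must match the labels on the pod template.
            "selector": {"matchLabels": dict(labels)},
            "strategy": {
                "type": "RollingUpdate",
                # Replace pods one at a time without dropping below `replicas`.
                "rollingUpdate": {"maxUnavailable": 0, "maxSurge": 1},
            },
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
        },
    }


deployment = make_deployment("web", "nginx:1.27", replicas=3, labels={"app": "web"})
print(json.dumps(deployment, indent=2))
```

Changing `replicas` or `image` in the manifest and re-applying it is all it takes to request a scale-out or a new version. Kubernetes works out which containers to create, migrate, or remove.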

Monitoring

This container orchestration platform continuously monitors container health. It automatically restarts failed containers and removes unresponsive ones, ensuring application reliability.
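Restarts of a repeatedly failing container are not immediate forever. The Kubernetes documentation describes an exponential back-off (10s, 20s, 40s, and so on) capped at five minutes. The small sketch below reproduces that sequence of delays. The function name and parameters are assumptions, and details such as when the back-off timer resets are left out.

```python
def restart_delays(restarts, base_seconds=10, cap_seconds=300):
    """Back-off delay in seconds before each successive restart attempt."""
    return [min(base_seconds * 2 ** i, cap_seconds) for i in range(restarts)]


print(restart_delays(7))
```

The growing delays are what shows up as the `CrashLoopBackOff` status when a container keeps failing shortly after it starts.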

Load Balancing

By distributing traffic across multiple container instances, Kubernetes optimizes resource utilization and improves application performance.
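The effect of spreading traffic can be seen in a few lines. The sketch below distributes requests over a set of pod names using round-robin, a deliberately simple strategy chosen for illustration. The actual selection policy in a cluster depends on how the proxy is configured and may, for example, pick backends at random. The pod names are hypothetical.

```python
from collections import Counter
from itertools import cycle


def distribute(request_count, backends):
    """Assign requests to backends in round-robin order and count the result."""
    if not backends:
        raise ValueError("at least one backend is required")
    rotation = cycle(backends)
    return Counter(next(rotation) for _ in range(request_count))


print(distribute(7, ["pod-a", "pod-b", "pod-c"]))
```

No single instance receives more than one request above any other, which is why adding replicas behind a Service raises the total capacity of the application.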

Storage

This container orchestration platform supports various storage types, from local storage to cloud resources, making it flexible for different application needs.

Optimization

The platform intelligently allocates resources by identifying available worker nodes and matching container requirements. This resource optimization enhances efficiency.

Security

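Kubernetes stores sensitive information like passwords, tokens, and SSH keys in Secret objects, separate from application code and container images. Combined with access controls and encrypted communication between components, this helps protect confidential data, although Secrets are only base64-encoded by default and should be paired with encryption at rest and restrictive RBAC rules.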

High Availability

Failed containers are automatically restarted, and healthy nodes are utilized for rescheduling, ensuring uninterrupted service availability.

Open Source

Kubernetes benefits from a vibrant community of developers and organizations. Its extensibility allows users to customize and enhance functionality as needed.

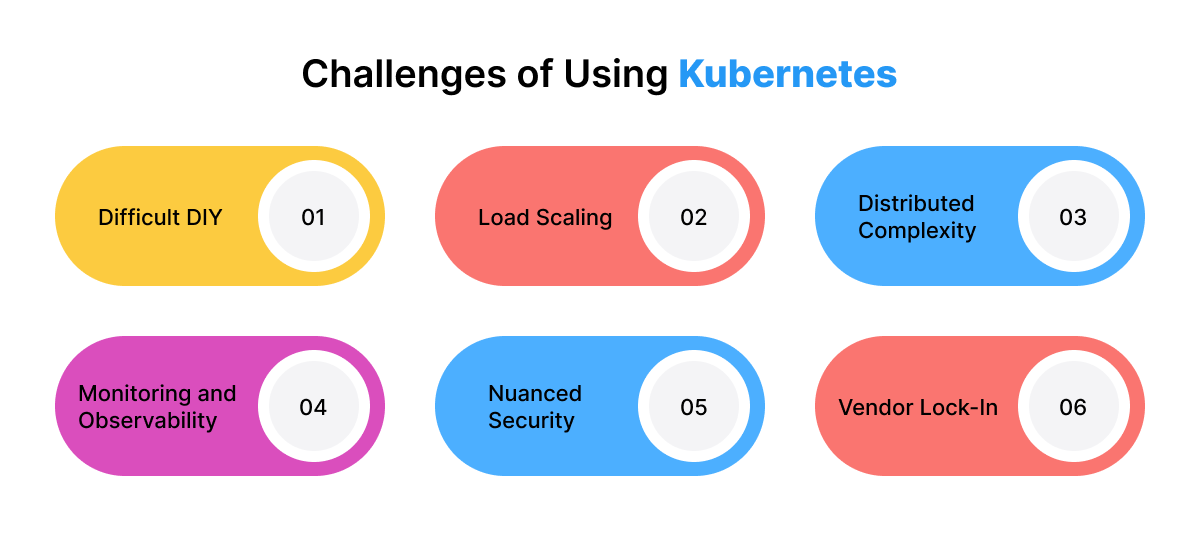

Challenges of Using Kubernetes

Using Kubernetes poses several challenges, including:

Difficult DIY

Some organizations prefer to manage open-source Kubernetes themselves, leveraging their skilled staff and resources. However, many opt for services from the broader ecosystem to simplify deployment and management.

Load Scaling

Containerized application components may scale differently or not at all under load. Balancing pods and nodes is essential to ensure efficient scaling.

Distributed Complexity

While distributing app components in containers allows flexible scaling, excessive distribution can increase complexity, affecting network latency and availability.

Monitoring and Observability

As container deployment grows, understanding what happens behind the scenes becomes challenging. Monitoring various layers of the Kubernetes stack is crucial for performance and security.

Nuanced Security

Deploying containers in production introduces multiple security layers, including vulnerability analysis, multifactor authentication, and stateless configuration handling. Proper configuration and access controls are vital.

Vendor Lock-In

Managed Kubernetes services from cloud providers can lead to vendor lock-in. Migrating between services or managing multi-cloud deployments can be complex.

Best Practices for Kubernetes Clusters Architecture

Here are some of the best practices for architecting effective clusters based on Gartner’s recommendations:

- Keep Kubernetes Versions Updated: Regularly update to the latest version. New releases often include important security patches, bug fixes, and performance improvements.

- Invest in Team Education: Prioritize training for both the developer and operations teams. A well-informed team can better manage clusters and address challenges effectively.

- Standardize Integration and Governance: Establish enterprise-wide governance standards to ensure that all tools and vendors align properly with Kubernetes. Consistent integration practices enhance efficiency and security.

- Scan Container Images: Integrate image scanners into your CI/CD pipeline. Scan images during both build and run cycles. Be cautious with open-source code from GitHub repositories to avoid potential security risks.

- Control Access with RBAC: Implement role-based access control (RBAC) across all clusters. Enforce the least privilege principle and adopt zero-trust models to enhance security.

- User Restrictions: For secure containers, use non-root users and set file systems to read-only mode. This minimizes potential vulnerabilities.

- Opt for Minimal Base Images: Start from small, trusted base images when building containers. Unvetted public images from Docker Hub may contain unnecessary code or even malware. Smaller images build faster and consume less disk space.

- Simplify Containers: Set up one process per container. This simplifies orchestration and allows better monitoring of process health.

- Use Descriptive Labels: Label clusters and components clearly. Descriptive labels help other developers and relevant parties understand the structure and workflows within the cluster.

- Avoid Over-Granularity: Not every function within a logical code component needs to be a separate microservice. Balance granularity to avoid unnecessary complexity.

- Automate CI/CD Pipelines: Automate Kubernetes deployments to reduce manual intervention. An automated pipeline streamlines the deployment process.
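Two of the practices above, least-privilege RBAC and restricted container users, translate directly into manifest fields. The sketch below shows a read-only Role and a hardened container `securityContext` as Python dictionaries, plus a toy permission check. The namespace, the role name, and the `is_allowed` helper are assumptions for illustration. The check ignores wildcards and resource names that real RBAC evaluation supports.

```python
import json

# A namespaced Role that can only read pods (least privilege).
pod_reader_role = {
    "apiVersion": "rbac.authorization.k8s.io/v1",
    "kind": "Role",
    "metadata": {"namespace": "demo", "name": "pod-reader"},
    "rules": [
        # The empty string names the core API group, where pods live.
        {"apiGroups": [""], "resources": ["pods"], "verbs": ["get", "list", "watch"]}
    ],
}

# Container-level settings for a non-root user and a read-only file system.
hardened_security_context = {
    "runAsNonRoot": True,
    "readOnlyRootFilesystem": True,
    "allowPrivilegeEscalation": False,
}


def is_allowed(role, verb, resource, api_group=""):
    """Toy check: does any rule in the role grant this verb on this resource?"""
    return any(
        api_group in rule["apiGroups"]
        and resource in rule["resources"]
        and verb in rule["verbs"]
        for rule in role["rules"]
    )


print(json.dumps(pod_reader_role, indent=2))
print(is_allowed(pod_reader_role, "list", "pods"))
print(is_allowed(pod_reader_role, "delete", "pods"))
```

Anything not explicitly granted is denied, so the role can list pods but cannot delete them.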

Conclusion

After reading this guide, you should have a clearer picture of how Kubernetes has revolutionized cloud-native application management by orchestrating containers and microservices in a way that suits DevOps deployments.

Its foundation enables seamless scalability and automation, essential for managing pods across nodes efficiently. Numerous businesses have started to embrace this container orchestration platform to expedite the deployment of robust, scalable applications, minimizing technical debt compared to traditional monolithic approaches.

As Kubernetes adoption grows, the need for advanced development tools, lifecycle management solutions, and robust cloud-native application security will rise significantly.

FAQs

Is Kubernetes a Docker?

No, Kubernetes and Docker serve different purposes. Docker is a containerization platform that allows you to build, test, and run applications in containers. On the other hand, Kubernetes is a container orchestration tool that helps manage, coordinate, and schedule containers at scale.

What is Kubernetes Tool Used for?

It is an open-source container management tool that automates container deployment, scaling up and down, and load balancing. It provides a platform for running containerized applications, making it easier to manage distributed containerized apps across clusters of servers.

Is Kubernetes Cloud or DevOps?

It is not strictly categorized as either Cloud or DevOps. It is an orchestration tool that facilitates container management. While it is often used in DevOps practices, it doesn’t directly provision hardware (like Cloud services) but focuses on managing containers.

Can I Use Docker without Kubernetes?

Yes, you can use Docker without Kubernetes. Docker is a standalone software designed to run containerized applications. It allows you to build, package, and manage containers independently. However, Kubernetes enhances container management and scalability.

Is Kubernetes Similar to Azure?

Kubernetes and Azure are not the same, but they are related. Azure is Microsoft’s cloud platform, and Azure Kubernetes Service (AKS) is its managed Kubernetes offering. Kubernetes is an open-source container orchestration platform, while AKS provides a managed environment for deploying and managing containerized apps on Azure.

What is a Kubernetes Secret?

A Kubernetes Secret is an object that stores sensitive information, such as passwords, API keys, and tokens. Secrets are used to securely manage and distribute confidential data to pods within a cluster.
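To show what a Secret looks like, the sketch below builds a Secret manifest in Python. Values under `data` must be base64-encoded. This is an encoding, not encryption, so anyone who can read the object can decode it. The secret name, key, and value are made up for the example.

```python
import base64
import json


def make_secret(name, values):
    """Build an Opaque Secret manifest with base64-encoded values."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name},
        "type": "Opaque",
        "data": {
            key: base64.b64encode(value.encode("utf-8")).decode("ascii")
            for key, value in values.items()
        },
    }


secret = make_secret("db-credentials", {"password": "s3cr3t"})
print(json.dumps(secret, indent=2))

# Decoding needs no key, which is why access to Secrets should be restricted.
print(base64.b64decode(secret["data"]["password"]).decode("utf-8"))
```

The last line recovers the original value, which is why encryption at rest and tight RBAC rules matter for Secrets.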